Latest Developments for Safe and Reliable Railways

Hitachi adopted virtualization for a traffic management system for the first time when it integrated the systems for the Tohoku Main Line and Senseki Line. Along with consolidating hardware, the integration was carried out in a way that satisfied the customer’s requirements. Multiple guests on the virtualization platform allowed operation on the Senseki Line to commence earlier, which minimized the risks associated with switching to the new system, and allowed a training environment to be provided in step with the development schedule. The realtime virtualization platform adopted for the project delivered the realtime control performance, high availability, and ease of maintenance demanded of a traffic management system. Configuring the system on a virtualization platform also helps minimize life cycle costs by reducing the amount of development work needed during future equipment upgrades.

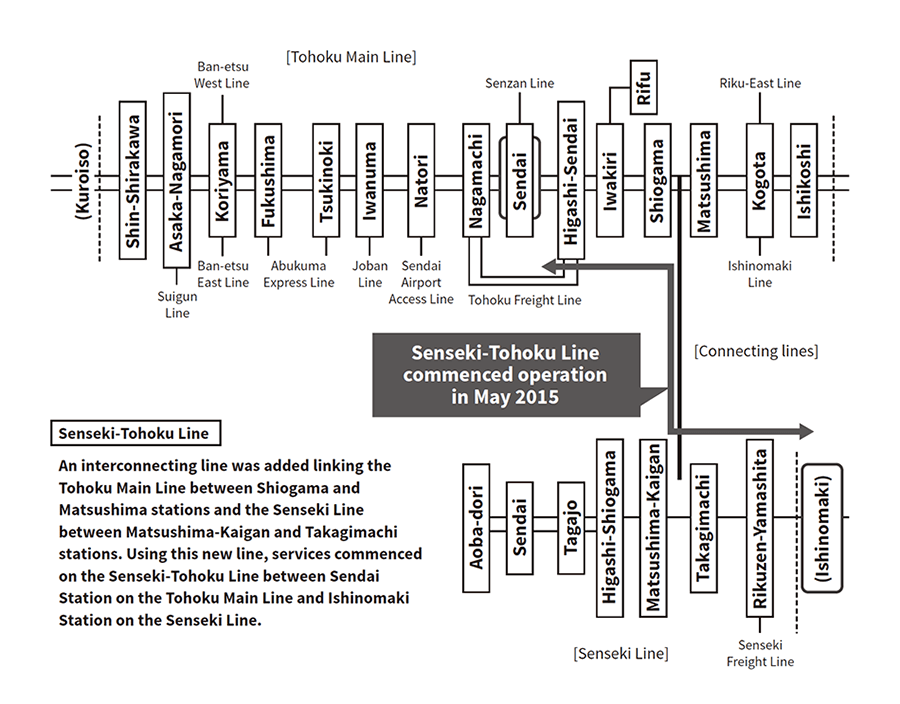

The Tohoku Main Line (from Kuroiso to Ishikoshi) and the Senseki Line (from Aoba-dori to Rikuzen-Yamashita) each had their own control centers and East Japan Railway Company had established separate traffic management systems for the lines, with no facility for the sharing of track.

An interconnecting line between the Tohoku Main Line and Senseki Line was completed in May 2015, and the new Senseki-Tohoku Line was introduced, running from Sendai Station on the Tohoku Main Line to Ishinomaki Station on the Senseki Line. As the Senseki Line runs on direct current (DC) electric power whereas the Tohoku Main Line runs on alternating current (AC), the service was provided using HB-E210 series hybrid diesel rolling stock. Because the two traffic management systems were independent of each other, restrictions such as the inability of the previous systems to share train number information prevented the adoption of fully automatic control. As a result, trains needed to halt on the connecting line, and the control center had to use manual procedures for some actions.

With both systems coming due for replacement, a new traffic management system that integrated operation on the Tohoku Main Line and Senseki Line was installed to achieve time savings from use of passage control and full automation of the connecting line (see Figure 1). As the traffic management system needed to provide scope for expansion as well as sustainable long-term operation with high reliability, the new system was implemented using information and control servers running on a realtime virtualization platform with enhanced realtime control performance for programmed route control (PRC) so as to reduce life cycle cost, including the cost of future upgrades.

This article describes the features of the realtime virtualization platform and its use for the traffic management system.

Figure 1—System Scope The Tohoku Main Line and Senseki Line each had their own traffic management system. Train services that operated under both systems commenced in May 2015 with the opening of the Senseki-Tohoku Line.


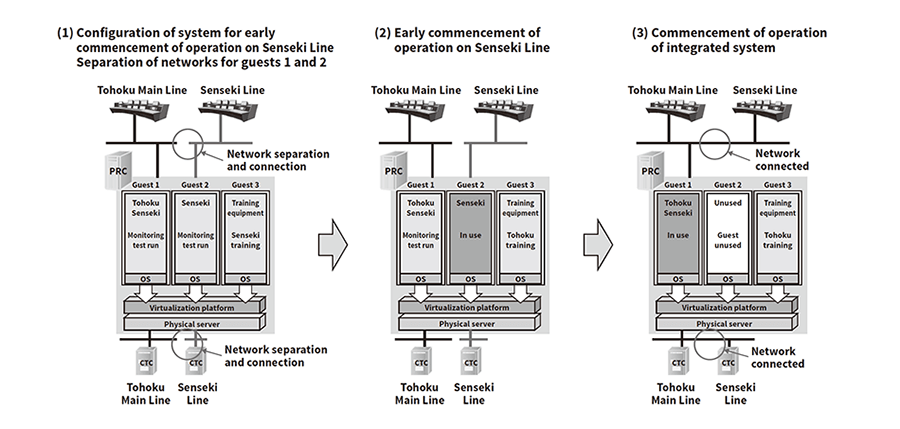

The information and control servers used for the upgrade are able to host virtual machines (“guests”) for up to three systems. These are the integrated system for the Tohoku Main Line and Senseki Line, the training system, and a system to be used for new sections of track in the future.

Whereas the previous training system lacked PRC and was only used for teaching console operation, adoption of the virtualization platform means a training environment can be provided that includes PRC and closely approximates actual operation. To minimize the switchover risks associated with the system integration, the project also included a staged switchover to the new system, with guests intended for future use being utilized to commence operation on the Senseki Line first.

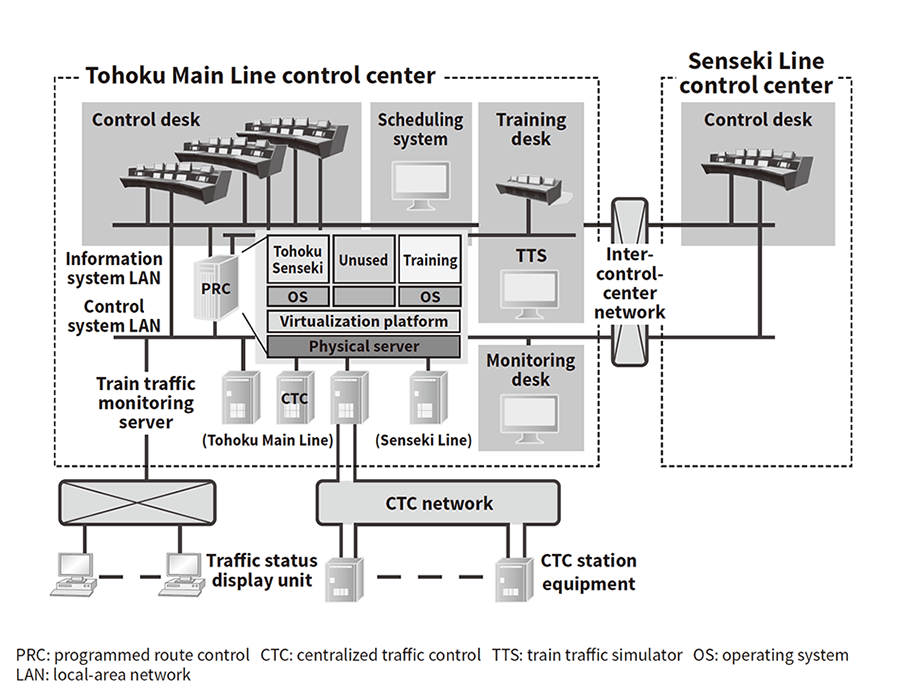

As control center operation was to continue as before, the configuration was made up of a single system with two control centers. The main equipment for PRC and centralized traffic control (CTC) was located at the Tohoku Main Line control center, with the Senseki Line control center having control desks only. These were connected via a network running between the centers (see Figure 2).

Compared to the standalone systems, system integration reduced the number of devices required for shared equipment such as the monitoring desks, scheduling system, and train traffic monitoring server that are not associated with particular sections of track, thereby saving both energy and space. Consolidating all of the key equipment in the same place also simplified maintenance.

Figure 2—Block Diagram of Traffic Management System for Tohoku Main Line and Senseki Line The CTC, control desks, and PRC are connected to the control system LAN and the control desks and PRC are connected to the information system LAN. Each LAN has a redundant dual-network configuration.


Use of a virtualization platform meant that the risks associated with switching over to the integrated system could be minimized by configuring the parts of the system intended for the Senseki Line on unused guests and bringing these into service before the rest of the system (see Figure 3).

Figure 3—Operation of Virtualization Guests The Senseki Line part of the system commenced operation using what will ultimately be unused guest 2 (intended for future use). This was done to minimize the risks during switchover, with it being possible to switch over to the integrated system by switching the network connections.


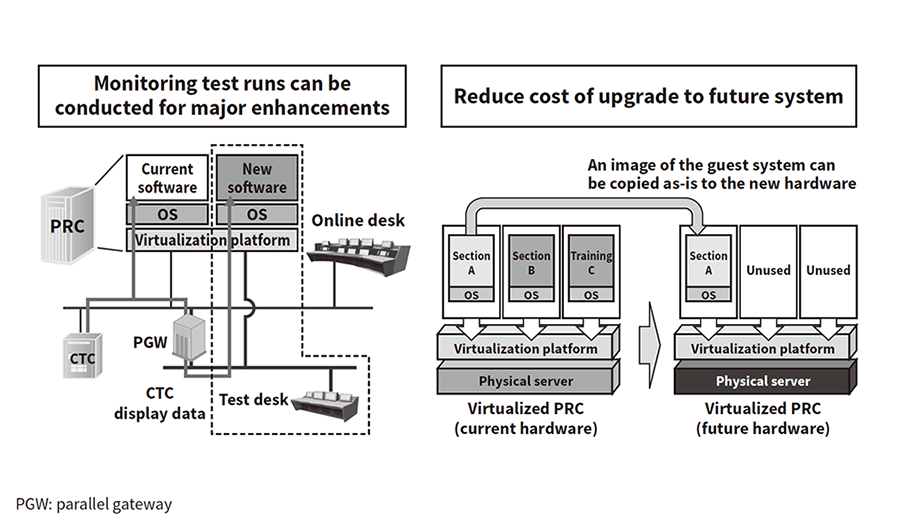

In addition to their use for future branch line additions or for other systems, unused guests can also be used for monitoring test runs during major system enhancements to reduce the cost and improve the quality of on-site testing (see Figure 4).

Furthermore, past system upgrades have required a lot of time and effort to be spent on the migration of applications as a consequence of hardware and operating system life cycles. Developing and implementing the new system on a virtualization platform, in contrast, is intended to reduce the amount of development work required when upgrading to new hardware.

Figure 4—Use of Virtualization Guests Previously unused guests can be used to conduct monitoring test runs for major enhancements. The amount of modification work required for future upgrades is reduced because applications are able to be migrated as objects.


The realtime control performance requirements of the information and control servers include predictability (that operations are executed in a deterministic order and with predictable results), timeliness (that operations commence at the required time intervals), and low latency (that the time between an operation being requested and its associated process actually starting to execute is short). A problem with the server virtualization used for general-purpose information systems is that it is subject to variable latency due to delays in software execution by the virtual machines. These delays have two causes: the conflicts that arise when multiple virtual machines want to execute simultaneously, and the server virtualization platform's use of software to emulate the virtual machines' access to physical hardware.
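As an illustration of how timeliness and latency might be quantified, the following minimal sketch computes start latency and deviation from the nominal period for a periodic task. The function names, the 100 ms period, and the timestamps are hypothetical values chosen for illustration, not measurements from the system described here.

```python
def start_latencies(requested, started):
    """Latency of each operation: time from the request to the start of execution."""
    return [s - r for r, s in zip(requested, started)]


def period_jitter(started, period):
    """Deviation of each interval between consecutive starts from the nominal period."""
    return [(b - a) - period for a, b in zip(started, started[1:])]


# Hypothetical timestamps in milliseconds for a task requested every 100 ms.
requested = [0, 100, 200, 300]
started = [2, 103, 202, 309]

latencies = start_latencies(requested, started)
jitter = period_jitter(started, period=100)
worst_latency = max(latencies)

print("latencies (ms):", latencies)
print("period jitter (ms):", jitter)
print("worst-case latency (ms):", worst_latency)
```

In this made-up data, the last activation starts 9 ms after its request, which is the kind of outlier that variable latency under general-purpose server virtualization would produce.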

Accordingly, the realtime virtualization platform software used for the traffic management system has a resource allocation mechanism that gives each virtual machine exclusive use of the processors, disk, and network hardware it is using. This mechanism eliminates conflicts between virtual machines that are running simultaneously. Similarly, the latency of the software running on the virtual machines is kept within a set limit by executing this software on different processor cores from those used for the realtime virtualization platform itself. This overcomes the problem of variable latency when using server virtualization and, together with other measures for ensuring predictability and timeliness, ensures that the virtual machines deliver realtime control performance (see Figure 5).
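The idea of exclusive processor allocation can be sketched as a simple partitioning of cores. This is only an illustrative model: the function `allocate_cores`, the core counts, and the guest names are assumptions, and the actual platform's allocation interface is not described in this article.

```python
def allocate_cores(total_cores, platform_cores, guest_demands):
    """Give each guest its own cores, none shared with the platform or other guests."""
    # Cores reserved for the virtualization platform are never offered to guests.
    free = [c for c in range(total_cores) if c not in platform_cores]
    plan = {}
    for guest, count in guest_demands.items():
        if count > len(free):
            raise ValueError(f"not enough free cores for {guest}")
        # Hand out the next `count` free cores and remove them from the pool.
        plan[guest], free = free[:count], free[count:]
    return plan


# Hypothetical 8-core host: cores 0 and 1 are kept for the platform itself.
plan = allocate_cores(
    total_cores=8,
    platform_cores={0, 1},
    guest_demands={"integrated": 3, "training": 2, "future": 1},
)
print(plan)
```

Because no core appears in more than one guest's list or in the platform's set, one guest's workload cannot delay another's by competing for the same processor, which is the property the resource allocation mechanism relies on.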

Figure 5—Ensuring Realtime Control Performance The diagram shows the possible benefits of the resource allocation mechanism of the realtime virtualization platform. While delays in the completion of execution by software A due to reasons (1) and (2) occur in the timechart at the top-right, the execution delay for the software A becomes constant when the resource allocation mechanism is used, as shown in the timechart at the bottom-right.


The method used to improve availability on past information and control systems was to use multiple information and control servers (redundancy) so that continuity of execution could be retained in the event of a fault by rapidly switching over executing software to different computing hardware. Likewise, when using virtualization, high availability is achieved by adopting a redundant configuration for the computing hardware that hosts virtual information and control servers and providing the capability for high-speed switchover.

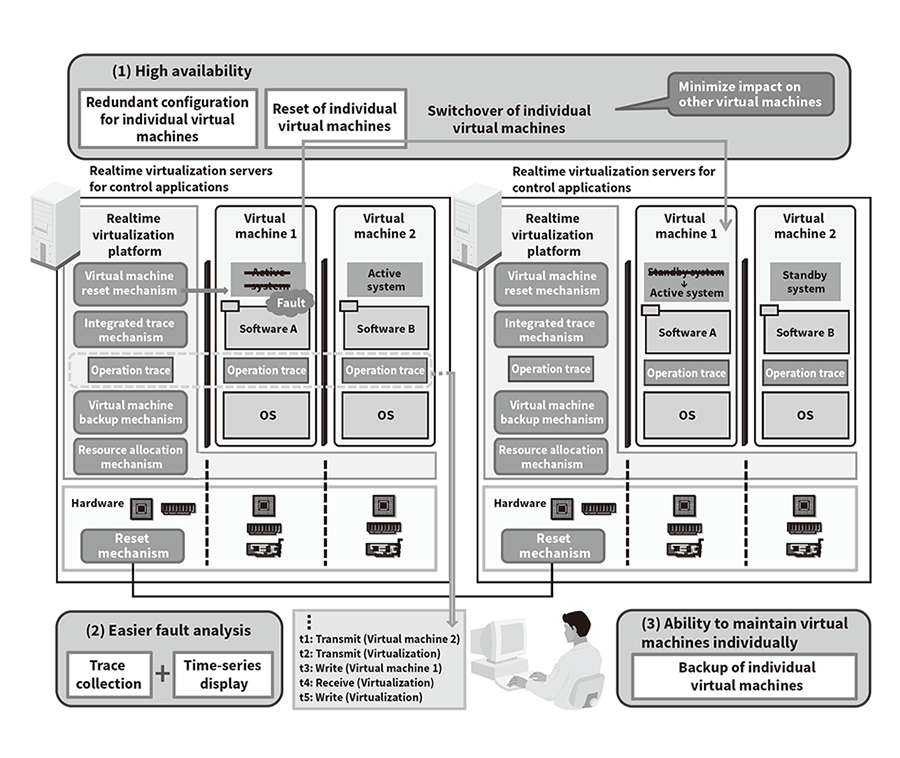

In the past, rapid switchover was achieved by assigning a high priority to the monitoring of each system by the other so as to prevent misdetection due to execution delays. When a fault is detected, the computing hardware's reset mechanism is used to shut down the faulty hardware, which enables fast and reliable switchover. The realtime virtualization platform computing hardware likewise assigns a high priority to monitoring of each system by the other and uses the reset mechanism for rapid switchover. If virtual machines were to monitor each other, faults could be misdetected due to an accumulation of execution delays, and this would make it impossible to shorten the monitoring times. The realtime virtualization platform instead achieves faster fault detection because it is configured to perform monitoring directly on the same computing hardware as the virtual machines. When a fault occurs on a virtual machine, the platform resets that virtual machine only and switches it over to the backup computing hardware, minimizing the impact on the other virtual machines on the same computing hardware that do not have a fault. In this switchover mode, the virtual machines that do not have a fault are not switched over [see Figure 6 (1)].
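The per-guest switchover behavior can be illustrated with a small heartbeat-timeout model. This is a sketch under stated assumptions: the `GuestState` class, the `check_guests` function, the host names, and the 0.5-second timeout are all hypothetical, and the real platform's monitoring and reset mechanisms are not detailed in the text.

```python
from dataclasses import dataclass


@dataclass
class GuestState:
    active_host: str
    standby_host: str
    last_heartbeat: float  # time of the most recent sign of life, in seconds


def check_guests(guests, now, timeout):
    """Fail over only guests whose heartbeat is overdue; leave healthy guests alone."""
    failed_over = []
    for name, guest in guests.items():
        if now - guest.last_heartbeat > timeout:
            # Model of "reset that guest only, then switch to the backup hardware".
            guest.active_host, guest.standby_host = guest.standby_host, guest.active_host
            guest.last_heartbeat = now
            failed_over.append(name)
    return failed_over


# Two hypothetical guests on the same host; only "training" has gone silent.
guests = {
    "integrated": GuestState("host-A", "host-B", last_heartbeat=10.0),
    "training": GuestState("host-A", "host-B", last_heartbeat=9.2),
}
switched = check_guests(guests, now=10.1, timeout=0.5)
print(switched, guests["integrated"].active_host, guests["training"].active_host)
```

Only the silent guest moves to the standby host, while the healthy guest stays where it is. A shorter timeout gives faster detection but is only safe if the monitor itself is not subject to accumulated execution delays, which is the motivation given above for monitoring directly on the computing hardware.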

When information and control servers need to be shut down for maintenance work, such as replacing software, a common practice for preserving the continuity of on-site system operation is to shut the servers down in turn, one at a time, so that at least one of the multiple servers is running at all times. To allow for this, the realtime virtualization platform has the ability to manually shut down individual virtual machines.
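The one-at-a-time procedure described above can be sketched as follows. The function `rolling_maintenance`, the `maintain` callback, and the server names are hypothetical and stand in for whatever shutdown and update steps a site actually uses.

```python
def rolling_maintenance(servers, maintain):
    """Maintain servers one at a time so that at least one stays in service."""
    if len(servers) < 2:
        raise ValueError("a redundant server is needed to keep the system running")
    running = set(servers)
    history = []
    for server in servers:
        running.remove(server)   # shut down this server only
        maintain(server)         # e.g. replace software while the others keep running
        history.append((server, sorted(running)))
        running.add(server)      # return it to service before touching the next one
    return history


maintained = []
history = rolling_maintenance(["server-1", "server-2"], maintained.append)
for server, still_running in history:
    print(f"maintained {server} while {still_running} stayed in service")
```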

Figure 6—Enhancements to Availability, Fault Analysis, and Maintenance Use of the reset mechanism enables high-speed switchover, the integrated trace mechanism facilitates analysis, and the resource allocation mechanism makes for easier maintenance.


Because server virtualization involves running the virtual machines and the virtualization platform in parallel, fault analysis becomes more difficult, including when execution delays occur. To deal with this, the realtime virtualization platform is equipped with an integrated trace mechanism that can present operation trace data in time-series format. This provides a unified overview of the operation of each virtual machine, of the other virtual machines, and of the realtime virtualization platform itself, making it possible to rapidly identify the source of execution delays [see Figure 6 (2)].
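One way to picture a time-series view across the platform and its guests is to merge per-source trace records by timestamp. The sketch below uses Python's standard `heapq.merge`. The record layout `(time, source, event)` and the sample events are assumptions made for illustration, not the platform's actual trace format.

```python
import heapq


def merge_traces(*traces):
    """Merge per-source traces (each already sorted by time) into one timeline."""
    return list(heapq.merge(*traces, key=lambda event: event[0]))


# Hypothetical trace records: (time in ms, source, event).
platform = [(1.0, "platform", "schedule guest1"), (4.0, "platform", "io complete")]
guest1 = [(1.2, "guest1", "task start"), (4.5, "guest1", "task end")]
guest2 = [(2.0, "guest2", "disk read")]

timeline = merge_traces(platform, guest1, guest2)
for time_ms, source, event in timeline:
    print(f"{time_ms:6.1f} ms  {source:<9} {event}")
```

With the events interleaved in time order, an analyst can see, for example, that guest1's task did not finish until after a platform I/O completion, rather than having to correlate separate logs by hand.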

As an information and control system uses separate information and control servers for control and for each of its other functions, the job of backing up software includes making frequent system backups of these servers. Because software maintenance is likely to be needed on individual virtual machines when several are running, the realtime virtualization platform includes the ability to perform system backups separately for each virtual machine [see Figure 6 (3)].

As backups typically involve copying large amounts of data from disk to backup storage, this needs to be done in a way that does not interfere with the operation of other currently executing virtual machines. To achieve this, the resource allocation mechanism referred to earlier includes a mode that limits resource use by backup operations, including processor time and disk access bandwidth. This enables maintenance work to be performed rapidly and reliably on individual virtual machines.
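A bandwidth cap on backup copying can be sketched as a chunked copy that pauses whenever it gets ahead of its allowed rate. This covers only the disk bandwidth aspect, not processor time, and everything here is assumed for illustration: the function `throttled_copy`, its parameters, the simulated clock, and the 500 bytes-per-second limit.

```python
import io
import time


def throttled_copy(src, dst, max_bytes_per_sec, chunk_size=64 * 1024,
                   clock=time.monotonic, sleep=time.sleep):
    """Copy src to dst, pausing so that average throughput stays under the cap."""
    start = clock()
    copied = 0
    while True:
        chunk = src.read(chunk_size)
        if not chunk:
            return copied
        dst.write(chunk)
        copied += len(chunk)
        # Earliest time by which this many bytes are allowed to have been copied.
        earliest = start + copied / max_bytes_per_sec
        delay = earliest - clock()
        if delay > 0:
            sleep(delay)


class FakeClock:
    """Simulated clock so the demonstration runs instantly."""

    def __init__(self):
        self.t = 0.0

    def now(self):
        return self.t

    def sleep(self, seconds):
        self.t += seconds


fake = FakeClock()
source = io.BytesIO(b"x" * 1000)
target = io.BytesIO()
total = throttled_copy(source, target, max_bytes_per_sec=500, chunk_size=250,
                       clock=fake.now, sleep=fake.sleep)
print(total, "bytes copied in", fake.t, "simulated seconds")
```

With the default `clock` and `sleep` arguments the same function would pace a real copy between two file objects. The simulated clock is used here only so that the example finishes immediately.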

This article has presented a case study of Hitachi's first-ever use of a virtualization platform for a traffic management system on a conventional railway line, and described how the platform achieves realtime performance, high availability, and ease of maintenance.

In the future, Hitachi intends to continue adapting to new technology, reducing life cycle costs, and developing technology with the aim of supplying high-quality social infrastructure that is safe and secure.