23 January 2023

Alireza Ahrabian, PhD

European R&D Centre, Hitachi Europe Ltd.

Rapid urbanisation and high levels of private car ownership have made roads significantly more congested, leading to ever-increasing levels of pollution. Autonomous vehicles (AVs) have been proposed as a way to alleviate congestion: by providing ride-sharing services, they could reduce the number of cars on the road while transporting a similar number of people. Furthermore, AVs have the potential to reduce the annual number of traffic accidents by following predictable and safe driving routines that minimise the likelihood of accidents.

Vehicle localisation is a critical component of autonomous vehicles; it answers the question: where on this planet is the vehicle? Highly accurate localisation is essential for responsive route planning, and it enables the AV path planner to determine the trajectories the vehicle should follow.

Current production-level vehicle localisation systems rely on global navigation satellite systems (GNSS). However, the accuracy of GNSS measurements can degrade significantly when there is no line of sight between the GNSS satellites and the vehicle's receiver, as in urban canyons. To address this, my colleagues and I set out to improve the robustness of GNSS-based localisation using optical cameras, as reported in [1]. First, we estimate a priori how likely each region is to be GNSS denied, using a digital surface model (developed by our collaborator) that computes the visibility of GNSS satellites. If a region is categorised as GNSS denied, a low-cost camera-based localisation system replaces the GNSS measurements within that region; in GNSS-available regions, we determine the vehicle's global location from standard GNSS measurements.

In this work, the line of sight between the vehicle and the satellites is approximated by calculating the view of the sky, i.e. the part of the sky that is not blocked by buildings, vegetation, or the topography of the landscape. A digital surface model [1] is used to determine which lines of sight to the sky are blocked and which are unblocked (see Figure 1). The resulting sky-visibility score is an a priori estimate of GNSS availability: the higher the score, the more likely it is that GNSS is available [1].
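The collaborator's digital surface model is not described in detail here, so the following is only a minimal sketch of the general idea. It assumes the DSM is a regular grid of surface heights (a hypothetical list-of-lists `dsm`) and that the receiver sits at a grid cell. For each sampled azimuth it marches outwards to find the local horizon (the highest elevation angle subtended by the surface), then counts how many sampled sky elevations lie above it; the fraction of unblocked directions serves as the sky-visibility score. The sampling parameters (`n_azimuths`, `elevations_deg`, `max_range`, `receiver_height`) are illustrative assumptions, directions are not weighted by solid angle, and a real system might instead test actual satellite positions.

```python
import math


def sky_visibility_score(dsm, cell_size, row, col, receiver_height=1.5,
                         n_azimuths=36, elevations_deg=(5, 15, 30, 45, 60, 75),
                         max_range=200.0):
    """Fraction of sampled sky directions not blocked by the surface model.

    dsm: hypothetical regular grid (list of lists) of surface heights in
         metres, columns increasing eastwards, rows increasing southwards.
    cell_size: grid spacing in metres.
    (row, col): receiver cell; the receiver sits receiver_height metres
         above the surface at that cell.
    """
    n_rows, n_cols = len(dsm), len(dsm[0])
    z0 = dsm[row][col] + receiver_height
    step = cell_size / 2.0
    visible = total = 0
    for k in range(n_azimuths):
        az = 2.0 * math.pi * k / n_azimuths  # clockwise from north
        # March outwards to find the local horizon along this azimuth.
        horizon = -math.pi / 2.0
        d = step
        while d <= max_range:
            c = int(round(col + d * math.sin(az) / cell_size))
            r = int(round(row - d * math.cos(az) / cell_size))
            if not (0 <= r < n_rows and 0 <= c < n_cols):
                break  # ray left the modelled area; treat beyond as open sky
            horizon = max(horizon, math.atan2(dsm[r][c] - z0, d))
            d += step
        # A sky direction counts as visible if it lies above the horizon.
        for el in elevations_deg:
            total += 1
            if math.radians(el) > horizon:
                visible += 1
    return visible / total
```

On flat, open ground every sampled direction is visible and the score is 1.0; a wall of tall buildings on one side of the street removes the low-elevation directions on that side and lowers the score.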

Figure 1. Tracing blocked lines of sight from the vehicle to buildings, trees, and other structures that obstruct the view of the sky.

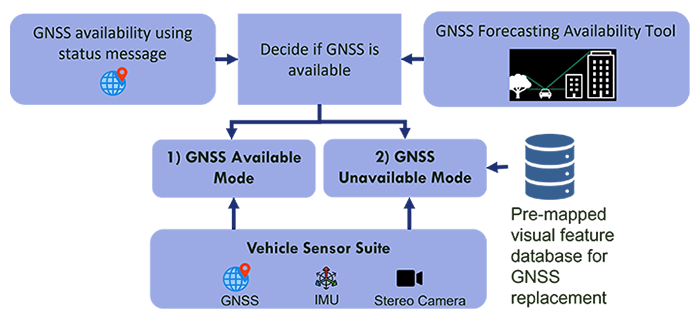

Our proposed localisation system has two primary components: 1) Map Generation; and 2) Online Localisation. The map generation component builds a high-definition visual feature map along a GNSS-denied route so that the online localisation component can localise the vehicle continuously and accurately. Figure 2 shows our proposed hybrid online localisation system, which is informed by GNSS availability. It has two primary modes of operation: 1) GNSS available mode; and 2) GNSS unavailable (denied) mode.
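How the a priori sky-visibility scores are turned into a mode decision, or into the list of stretches to pre-map, is not specified here. As a minimal sketch, assume the route is sampled at waypoints, each with a score in [0, 1], and that a single hypothetical `threshold` separates the two modes. Contiguous runs of GNSS-denied waypoints are then the stretches for which the map generation component would build a visual feature map. The names `Mode`, `classify_route`, and `segments_to_premap` are illustrative only.

```python
from enum import Enum


class Mode(Enum):
    GNSS_AVAILABLE = "gnss_available"
    GNSS_DENIED = "gnss_denied"


def classify_route(scores, threshold=0.5):
    """Assign an operating mode to each waypoint from its sky-visibility score.

    threshold is a hypothetical tuning parameter, not a published value.
    """
    return [Mode.GNSS_AVAILABLE if s >= threshold else Mode.GNSS_DENIED
            for s in scores]


def segments_to_premap(modes):
    """Return (start, end) waypoint index ranges, end exclusive, of contiguous
    GNSS-denied stretches: the parts of the route a visual feature map
    would need to cover."""
    segments = []
    start = None
    for i, mode in enumerate(modes):
        if mode is Mode.GNSS_DENIED and start is None:
            start = i  # entering a denied stretch
        elif mode is Mode.GNSS_AVAILABLE and start is not None:
            segments.append((start, i))  # leaving a denied stretch
            start = None
    if start is not None:
        segments.append((start, len(modes)))  # route ends while still denied
    return segments
```

For scores `[0.9, 0.8, 0.3, 0.2, 0.7, 0.1]`, waypoints 2 to 3 and waypoint 5 fall below the threshold, giving the segments `[(2, 4), (5, 6)]`. A practical system would probably add hysteresis so that a single noisy score does not toggle the mode.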

Figure 2. Proposed hybrid visual/GNSS-based localisation (geotagged visual localisation)

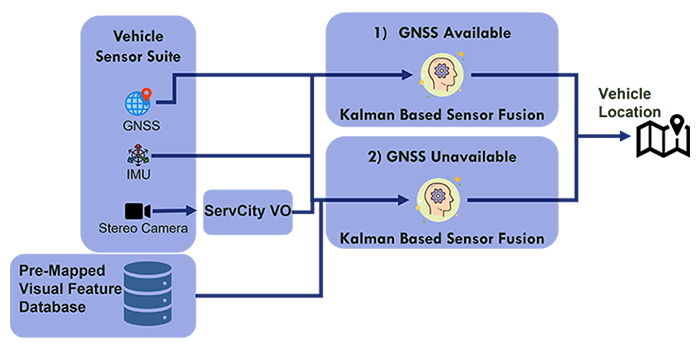

In both modes of operation, sensor fusion is performed within an extended Kalman filter (EKF) framework. Multiple relative pose measurements (the change in the vehicle's position and orientation relative to its previous position and orientation) and absolute pose measurements (the vehicle's position and orientation in the world) are fused to produce more accurate estimates of the vehicle pose. Figure 3 illustrates the modes of operation of the proposed online localisation system: relative pose measurements are generated from an inertial measurement unit (IMU) and visual odometry (VO), while absolute (global) pose measurements come from either 1) GNSS (GNSS available mode), or 2) pre-mapped visual features (GNSS unavailable mode).
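To show the structure of such a filter, here is a minimal planar EKF sketch, not the system's actual filter. It assumes a 2D pose state `[x, y, theta]`, a single relative pose input per step (for example from VO, or IMU data integrated over the step), and absolute measurements of the full pose, so the measurement Jacobian is the identity. The class `PoseEKF`, its method names, and the small matrix helpers are hypothetical; plain lists are used to keep the example dependency-free.

```python
import math


def matmul(A, B):
    return [[sum(A[i][k] * B[k][j] for k in range(len(B)))
             for j in range(len(B[0]))] for i in range(len(A))]


def transpose(A):
    return [list(row) for row in zip(*A)]


def madd(A, B):
    return [[a + b for a, b in zip(ra, rb)] for ra, rb in zip(A, B)]


def inv3(M):
    """Inverse of a 3x3 matrix via the adjugate."""
    (a, b, c), (d, e, f), (g, h, i) = M
    det = a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)
    adj = [[e * i - f * h, c * h - b * i, b * f - c * e],
           [f * g - d * i, a * i - c * g, c * d - a * f],
           [d * h - e * g, b * g - a * h, a * e - b * d]]
    return [[v / det for v in row] for row in adj]


def wrap(angle):
    """Wrap an angle to (-pi, pi]."""
    return math.atan2(math.sin(angle), math.cos(angle))


class PoseEKF:
    """Hypothetical EKF over a planar pose state [x, y, theta]."""

    def __init__(self, x, y, theta, P):
        self.x = [x, y, wrap(theta)]
        self.P = P  # 3x3 state covariance

    def predict(self, dx, dy, dtheta, Q):
        """Propagate with a relative pose (dx, dy, dtheta) expressed in the
        previous vehicle frame; Q is its 3x3 covariance."""
        px, py, th = self.x
        c, s = math.cos(th), math.sin(th)
        self.x = [px + c * dx - s * dy, py + s * dx + c * dy, wrap(th + dtheta)]
        # Jacobians of the motion model w.r.t. the state (F) and the input (G),
        # evaluated at the previous heading.
        F = [[1.0, 0.0, -s * dx - c * dy],
             [0.0, 1.0, c * dx - s * dy],
             [0.0, 0.0, 1.0]]
        G = [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]
        self.P = madd(matmul(matmul(F, self.P), transpose(F)),
                      matmul(matmul(G, Q), transpose(G)))

    def update_absolute(self, z, R):
        """Correct with an absolute pose z = [x, y, theta] and its 3x3
        covariance R. H is the identity, so K = P (P + R)^-1."""
        innov = [z[0] - self.x[0], z[1] - self.x[1], wrap(z[2] - self.x[2])]
        K = matmul(self.P, inv3(madd(self.P, R)))
        corr = [sum(K[i][j] * innov[j] for j in range(3)) for i in range(3)]
        self.x = [self.x[0] + corr[0], self.x[1] + corr[1],
                  wrap(self.x[2] + corr[2])]
        I_minus_K = [[(1.0 if i == j else 0.0) - K[i][j] for j in range(3)]
                     for i in range(3)]
        self.P = matmul(I_minus_K, self.P)
```

The mode switch then only changes where `z` and `R` come from: GNSS fixes in GNSS available mode, and poses derived from pre-mapped visual features in GNSS unavailable mode, each with its own covariance. Between absolute updates, `predict` keeps the estimate moving on relative measurements alone, with growing uncertainty in `P`.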

Figure 3. Proposed hybrid online vehicle localisation informed by GNSS availability

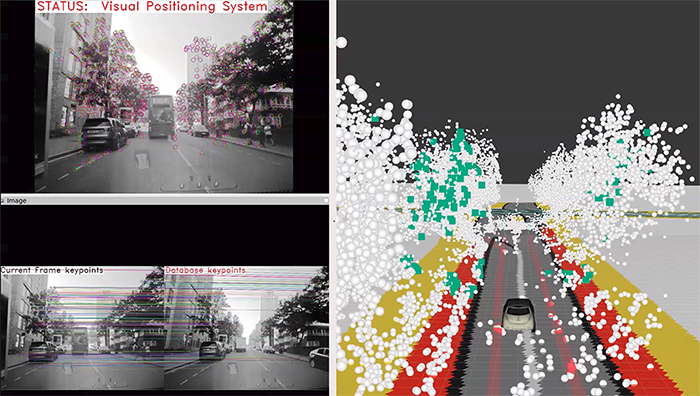

The full system demonstration combined the GNSS availability forecasting tool with the geotagged visual localisation system to overcome GNSS degradation along a test route in Woolwich, London. First, the GNSS availability tool was used to identify a route likely to suffer GNSS degradation; a visual feature map of that route was then generated. Our tests consisted of driving through the area identified as GNSS denied while switching between the GNSS available and GNSS unavailable modes of operation. The system maintained good lateral accuracy (a lateral error of approximately 10 cm) when driving through the GNSS-denied region. This is further illustrated in Figure 4, where the estimated vehicle pose (right panel) is aligned with the lane markings of a high-definition (HD) map.
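The exact computation of the lateral error, and the ground truth it was measured against, are not stated. A common definition, assumed here, projects the position error onto the axis perpendicular to the reference heading, so that along-track timing offsets do not inflate it. The function names and the choice of RMS as the summary statistic are illustrative assumptions.

```python
import math


def lateral_longitudinal_error(est_x, est_y, ref_x, ref_y, ref_heading):
    """Split the position error into components along and across the
    reference heading (radians, measured from the +x axis)."""
    ex, ey = est_x - ref_x, est_y - ref_y
    c, s = math.cos(ref_heading), math.sin(ref_heading)
    longitudinal = c * ex + s * ey  # along the direction of travel
    lateral = -s * ex + c * ey      # across it (positive = to the left)
    return lateral, longitudinal


def rms(values):
    """Root-mean-square of a non-empty sequence."""
    return math.sqrt(sum(v * v for v in values) / len(values))
```

For a vehicle heading along +x, an estimate 0.5 m ahead of and 0.1 m to the left of the reference has a lateral error of 0.1 m and a longitudinal error of 0.5 m; under this definition, a lateral error of about 10 cm corresponds to an RMS of lateral components near 0.1 m over the denied stretch.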

Figure 4. Top left: 1) the status of the geotagged visual localisation system and the visual features (keypoints) used for visual odometry; bottom left: 2) the matched visual features used by the visual positioning system to estimate an accurate vehicle pose; right panel: 3) the current vehicle position shown on an HD map, together with the 3D positions of the pre-mapped visual features.
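In GNSS unavailable mode, the absolute pose comes from matching currently observed visual features against pre-mapped ones, as in the bottom-left panel of Figure 4. That step is not detailed here, and in practice it is typically a 3D camera pose estimation problem (for example, Perspective-n-Point with outlier rejection). As a deliberately simplified planar stand-in, assume the matched features have already been located in the vehicle frame (hypothetical `vehicle_pts`) and are paired index by index with their map positions (hypothetical `map_pts`). The closed-form least-squares rigid alignment (a 2D Kabsch/Umeyama solution without scale) then recovers the vehicle's position and heading in the map frame.

```python
import math


def pose_from_matches(vehicle_pts, map_pts):
    """Least-squares planar pose (x, y, theta) of the vehicle in the map frame.

    Finds R(theta), t minimising sum |R p_i + t - q_i|^2 over matched pairs
    (p_i in the vehicle frame, q_i in the map frame). Because the vehicle
    sits at the origin of its own frame, t is its position and theta its
    heading.
    """
    n = len(vehicle_pts)
    if n < 2 or n != len(map_pts):
        raise ValueError("need at least two matched point pairs")
    pcx = sum(p[0] for p in vehicle_pts) / n
    pcy = sum(p[1] for p in vehicle_pts) / n
    qcx = sum(q[0] for q in map_pts) / n
    qcy = sum(q[1] for q in map_pts) / n
    cos_sum = sin_sum = 0.0
    for (px, py), (qx, qy) in zip(vehicle_pts, map_pts):
        ax, ay = px - pcx, py - pcy  # centred vehicle-frame point
        bx, by = qx - qcx, qy - qcy  # centred map-frame point
        cos_sum += ax * bx + ay * by  # dot product
        sin_sum += ax * by - ay * bx  # 2D cross product
    theta = math.atan2(sin_sum, cos_sum)
    c, s = math.cos(theta), math.sin(theta)
    # Translation maps the rotated vehicle-frame centroid onto the map centroid.
    tx = qcx - (c * pcx - s * pcy)
    ty = qcy - (s * pcx + c * pcy)
    return tx, ty, theta
```

The returned `(x, y, theta)` would act as the absolute pose measurement for the fusion filter; with noisy or mismatched features it would normally be wrapped in an outlier-rejection loop such as RANSAC.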

We have demonstrated that digital surface modelling of GNSS availability offers a potentially scalable way to identify routes that need to be pre-mapped for more accurate localisation. We further demonstrated that capturing and storing visual features along GNSS-denied routes enables robust and accurate estimates of the vehicle's location.