(April 3, 2017)

chapter 1

Kazuo Yano, Corporate Chief Scientist, Research & Development Group, Hitachi, Ltd.

AI is such a popular topic that it seems like every day we see "New Invention" or "New Collaboration" in the newspapers. Among these headlines are many fantastic or scary stories about people's jobs being taken over by machinery, or about the Singularity allowing machines to create other machines and put human beings under their control. I think these "fantasies" are quite unhealthy.

What AI can and cannot do is clearly defined. The word "AI," however, has taken on a very broad meaning. When word processors first came out, their ability to convert one Japanese writing system to the other was referred to as "AI conversion." When remote controls for TVs became "intelligent" enough that they could change the channel with just the press of a button, this was known as "AI television." When calculators got more advanced functions, they were called "AI calculators." The word "AI" has been applied haphazardly for quite some time.

In reality, there is only one thing that is important. That is the function known as "supervised learning."

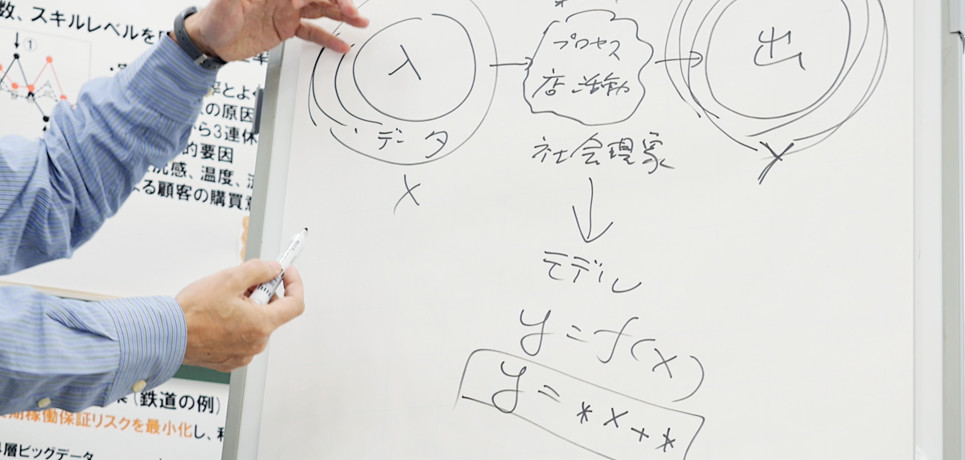

Data comes in at the entrance, and here (in the central part) there is some kind of process. This might be a business process, or it might be a social phenomenon. One concrete example would be the daily activities of a store. Then you get an output. There's quite a lot of this "input" data, right? (If we calculate with that,) we get a result, like "this is how much we sold over the course of a day."

If we pair up the data showing various phenomena or conditions with the numbers that are their results, we get a simple y = f(x)-style model, which can give us a rough sense of the intermediary process. Most simply, this could be derived from something like y = a × x + b, where the coefficients a and b are estimated from the data. If we enter a large amount of data, with "voice" at the entrance paired up with "what words were actually said" at the exit, this is called "voice recognition." If we put in a lot of data with "photographs" at the entrance paired up with "a picture of a cat" or "a picture of a person's face" at the exit, this is known as "image recognition."

The important point is putting in large volumes of paired "input data" and "output data," and automatically deriving the relationship models that connect the two. This is known as "supervised learning." The reason AI has been able to do so much more than before is that its supervised learning capacity has improved dramatically.
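As a minimal sketch of this idea, the Python example below fits the simplest possible model, a straight line y = a × x + b, to paired input and output data using ordinary least squares. The store figures (`customers`, `sales`) and the function names `fit_line` and `predict` are hypothetical and chosen only for illustration; they are not taken from any Hitachi system, and real supervised learning typically uses far more data and far richer models.

```python
def fit_line(xs, ys):
    """Least-squares fit of y = a * x + b from paired (input, output) samples."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Spread of the inputs, and how inputs and outputs vary together.
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    a = sxy / sxx              # slope
    b = mean_y - a * mean_x    # intercept
    return a, b


def predict(model, x):
    """Apply the learned relationship to a new input."""
    a, b = model
    return a * x + b


# Hypothetical paired data: customers per day (input) and sales that day (output).
customers = [100, 150, 200, 250, 300]
sales = [520, 770, 1020, 1270, 1520]

model = fit_line(customers, sales)   # "learn" the relationship from the pairs
print(model)
print(predict(model, 400))           # estimate sales for an unseen input
```

Nothing about the process in the middle (how the store actually turns customers into sales) is written into the code. The relationship is recovered purely from the input and output pairs, which is the point the text makes about supervised learning.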

Previously, our methodology involved creating an equation based on the input and the process, and plugging x into the equation to figure out the result. Alternatively, we took the deductive approach of studying the phenomena, the physics, and the sociology, and then making a prediction.

It is now possible to give AI both the conditional data at the entrance and the results at the exit, and have it determine the logic between entrance and exit, even if we don't already know the intermediary process. With just this advancement, we can put in voice or images, such as the board positions in a game of go, together with results like "game won or lost" and "how many squares were won," and by inputting a lot of data such as recorded games against various opponents, we can deduce how to win. Of course, this would not be possible with voice recognition, image recognition, and automatic translation alone. But with human support, the possibilities have increased.

With these processes made possible, the most important thing will be the quantitative effect on business administration, or in other words the economy. We can input the kind of numbers that we want to increase as a business, and the data that goes along with them. And by inputting both of these, we will be able not only to logically predict things like "Under what conditions can we achieve this result," but also to extrapolate them from previous data. We have achieved a methodology that will allow us to engage more systematically with societal and human issues that, unlike physics, are not readily solvable by equations.
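One very simple way to picture "inputting the number we want to increase together with the data that goes along with it" is to rank the accompanying data by how strongly each item moves with the target number. The sketch below does this with the Pearson correlation coefficient. It is an illustrative assumption, not a description of how Hitachi AI Technology/H works, and the factor names and figures are invented for the example. Correlation alone also does not establish cause and effect.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length series."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


def rank_factors(factors, target):
    """Sort candidate factors by the strength of their correlation with the target."""
    scores = {name: pearson(values, target) for name, values in factors.items()}
    return sorted(scores.items(), key=lambda item: abs(item[1]), reverse=True)


# Hypothetical daily records for one store (five days).
factors = {
    "staff_on_floor": [2, 3, 4, 5, 6],
    "temperature_c": [18, 25, 16, 22, 20],
    "discount_pct": [10, 0, 5, 0, 10],
}
daily_sales = [400, 610, 790, 1010, 1190]   # the number the business wants to increase

for name, score in rank_factors(factors, daily_sales):
    print(f"{name}: {score:+.3f}")
```

In this made-up data, staffing level tracks sales far more closely than the other two factors, so it would be the first condition to examine when asking how to raise the result.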

Hitachi, Ltd. has been working to solve these issues for a very long time. Hitachi AI Technology/H is Hitachi's artificial intelligence, specialized for the question of how best to improve the numbers of a business. What makes it technologically special is this: if we provide, for example, one day's sales and profits for a business branch as the exit, the entrance holds an enormous amount of data of many types, and it is not possible to match these up individually, as it is with the photograph and the cat in the "supervised learning" example. The constraints of "supervised learning" are too rigid to be useful for the purpose of improving business results.

What makes Hitachi AI Technology/H unique is its ability to deal with problems not on a "one-on-one" basis, but on a "one-on-many" basis. This is necessary to increase business results, and Hitachi has already secured a patent for this technology, known as "leap learning." It is already being tested in real situations for many business clients. For example, it automatically optimized shipping warehouses, raising their productivity by 8%, and it optimized worker placement in a store, leading to a 15% increase in sales per customer. It has also reduced the amount of electricity used by a railroad company in simulations. It is being used in securities companies to decide the optimal pricing from data when lending stocks. It's even being used in shipping, so that packages make it to their destinations right on time.

Hitachi AI Technology/H is being put to use in these diverse applications to solve all of the problems faced by businesses, but there are conditions: the numerical results must be clearly defined, and all of the relevant source data must be input. It is also necessary for a human to teach the AI where to create feedback. We believe that in this way, we have created the world's leading "multi-purpose AI," useful in many different situations.

Kazuo Yano, Dr. Eng.

Corporate Chief Scientist, Research & Development Group, Hitachi, Ltd.

He joined Hitachi, Ltd. in 1984. In 1993, he achieved the world's first successful operation of single-electron memory at room temperature. Since 2004, he has taken the lead in wearable technology, as well as the collection and utilization of big data. His papers have been cited 2500 times, and he has 350 patent applications. The wearable sensor he developed, known as the "Business Microscope," has been described by the Harvard Business Review as a "historic wearable device." He is known for the breadth and depth of his expertise, from AI to nanotechnology. His book, "Invisible Hand of Data: The Rule for People, Organizations, and Society Uncovered by Wearable Sensors" (Soshisha Publishing), was selected as one of BookVinegar's 10 Best Business Books of 2014. Dr. Yano has a doctorate in engineering and is an IEEE Fellow, a visiting professor at the Tokyo Institute of Technology, and a member of the Ministry of Education, Culture, Sports, Science and Technology's Information Science and Technology Committee. He has received many international awards, including the 2007 MBE Erice Prize and Best Paper at the 2012 International Conference on Social Informatics.

(As at the time of publication)