6 November 2020

Yuki Inoue

Research & Development Group, Hitachi, Ltd.

Hiroto Nagayoshi

Research & Development Group, Hitachi, Ltd.

Many developed countries are facing the challenge of a shrinking labor force and, as a result, a decrease in the number of available experts. This has increased the need to automate labor-intensive tasks in industry, and operation and maintenance tasks are no exception. To address this shortage in Japan, Hitachi Systems, Ltd., started offering an inspection service utilizing drones and AI in 2018 [1,2]. We collaborated in the development of the automatic crack detection technology, which is a key feature of this service. In this blog, we’d like to outline the technology we developed.

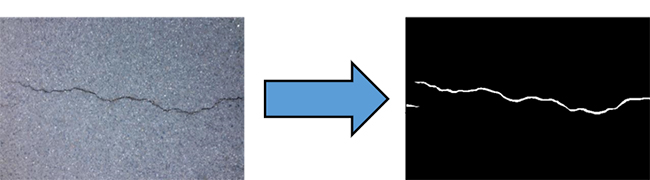

The crack detection problem can be formulated as a semantic segmentation problem: for each pixel in an image, the model predicts whether it belongs to a crack or not. Fig. 1 illustrates an example of the prediction process.

Figure 1: Illustration of the crack detection problem

The model takes an RGB image as an input, and outputs a crack probability map.

With recent advancements in artificial intelligence, there are many crack detectors that are very accurate. However, it is often difficult to deploy those detectors in the field because their AI models are designed to optimize only for accuracy and are unfriendly for use in a real-world environment. To fill this gap, we decided to focus on designing a crack detector that was practical for real-world deployment.

Below, we’ll share one of the ways in which we made our detector deployment friendly: extending the AI model so that it can also predict crack orientation. Crack orientation is one of the crucial factors in assessing how dangerous a crack is, so orientation prediction is important for real-world deployment.

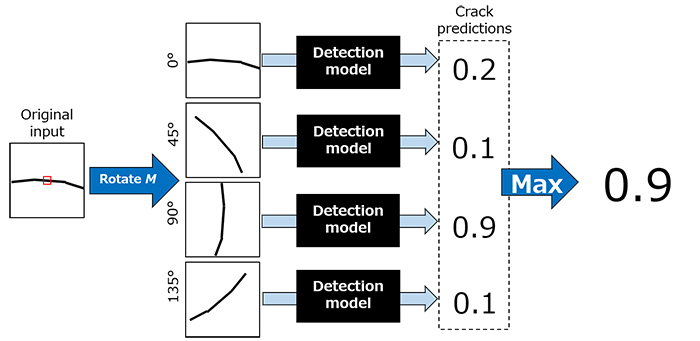

Fig. 2 shows the overall architecture of our network. It is based on a Multiple Instance Learning [3] architecture. The black boxes labeled “Detection model” represent 7-layer convolutional neural networks, and they all share the same weights. The inputs to these replicated models are rotated versions of the input image, and each model outputs a probability map. In the figure, there are four detection models, so we have four outputs. The outputs are combined with a maximum operation to produce the final output. This architecture grants the model a weak form of rotational invariance, which is crucial because a crack is still a crack regardless of its orientation in the image.
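As a rough illustration of the rotate, detect, and max-combine idea described above, here is a minimal NumPy sketch. It is not the network from the paper: the function names, the toy detector, and the choice of two paths at 0° and 90° (picked so that no interpolation is needed) are all assumptions made for this example. Rotating each output back into the input frame before taking the maximum is also an assumption, since the post does not spell out that step.

```python
import numpy as np


def fuse_rotated_predictions(image, detect, quarter_turns=(0, 1)):
    """Apply one shared detector to rotated copies of `image` and max-combine.

    image:         (H, W, 3) RGB array.
    detect:        callable mapping an (h, w, 3) array to an (h, w) probability map.
                   The same callable is reused on every path (shared weights).
    quarter_turns: rotations to apply, in multiples of 90 degrees (assumed angles).
    """
    maps = []
    for k in quarter_turns:
        rotated = np.rot90(image, k=k, axes=(0, 1))
        prob = detect(rotated)
        # Assumption: rotate each output back so all maps align with the input.
        maps.append(np.rot90(prob, k=-k, axes=(0, 1)))
    stacked = np.stack(maps, axis=0)      # shape (num_paths, H, W)
    return stacked.max(axis=0), stacked   # pixel-wise maximum over paths


def toy_horizontal_detector(image):
    """Hypothetical stand-in for the CNN: responds only to horizontal lines."""
    gray = image.mean(axis=2)
    return np.abs(np.diff(gray, axis=0, prepend=gray[:1]))


# A vertical "crack": invisible to the toy detector on the unrotated path,
# but picked up on the path that sees the image rotated by 90 degrees.
image = np.zeros((5, 5, 3))
image[:, 2, :] = 1.0
fused, stacked = fuse_rotated_predictions(image, toy_horizontal_detector)
```

Because the detector here only responds to one orientation, the maximum over paths is what lets the fused map cover cracks in other orientations.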

It turns out that this architecture can also be used to predict crack orientation. First, we train the model with two novel losses so that it can only detect cracks within a specific range of orientations. Then, during inference, we identify how much rotation was applied to the image. Using these two pieces of information, we can predict the range of the crack orientation with a resolution of 180°/M, where M represents the number of inference paths. An advantage of this method is that only a few orientation annotations are needed, because the model is capable of learning crack orientation from crack location annotations.
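To make the 180°/M resolution concrete, the sketch below decodes an orientation range per pixel from M per-path probability maps. It is an illustration under stated assumptions rather than the method from the paper: it assumes the maps are already aligned to the input frame, that path k applies a rotation of k·180°/M, and that the winning path k corresponds to the range from k·180°/M to (k+1)·180°/M. The function name, the threshold, and the angle convention are all hypothetical.

```python
import numpy as np


def orientation_bins(stacked, threshold=0.5):
    """Decode a per-pixel orientation range from per-path probability maps.

    stacked:   (M, H, W) array, one probability map per rotation path.
    threshold: probability above which a pixel counts as a crack (assumed value).
    Returns (low, high) arrays in degrees; non-crack pixels are NaN.
    """
    num_paths = stacked.shape[0]
    bin_width = 180.0 / num_paths           # resolution 180/M degrees
    winner = stacked.argmax(axis=0)         # path with the strongest response
    is_crack = stacked.max(axis=0) >= threshold
    # Assumed convention: path k maps to the range [k * width, (k + 1) * width).
    low = np.where(is_crack, winner * bin_width, np.nan)
    return low, low + bin_width


# Four paths, so each range is 45 degrees wide.
stacked = np.zeros((4, 2, 2))
stacked[2, 0, 0] = 0.9   # path 2 fires at pixel (0, 0)
stacked[0, 0, 1] = 0.8   # path 0 fires at pixel (0, 1)
low, high = orientation_bins(stacked)
```

With M = 4, pixel (0, 0) is assigned the 90° to 135° range and pixel (0, 1) the 0° to 45° range, while pixels below the threshold get no orientation. Increasing M narrows each range at the cost of more inference paths.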

Figure 2: Overall architecture based on Multiple Instance Learning

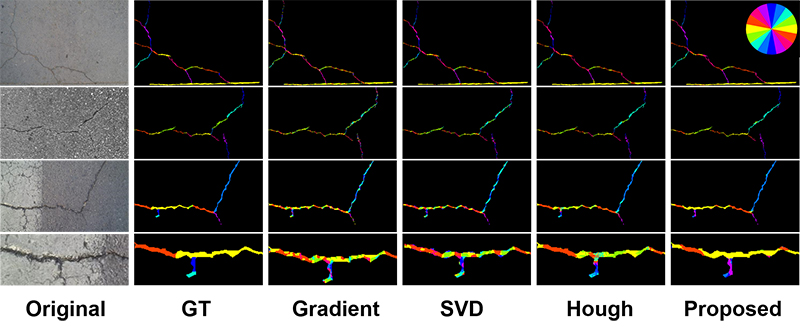

Fig. 3 shows some examples of the orientation prediction. The orientation at each pixel is indicated by colors corresponding to the color wheel on the top right of the figure. As can be seen, the samples for the proposed method match better with the ground truth and are more consistent.

Figure 3: Qualitative evaluation of the orientation prediction

As inspection processes continue to be automated, detection models need to be not only accurate but also easily deployable. This is especially critical for guaranteeing safe and accurate inspections in societies with aging populations, where both the working population and the number of experts are decreasing. In this blog, we focused on crack orientation prediction, which is one of our many efforts to make our crack detector more deployment friendly. Though this was only a brief description, we hope that you are now just as excited as we are about deployable crack detectors! For more details, we encourage you to read our paper.