New deep-learning-based AI model to deeply understand images

July 27, 2021

Hitachi has developed a new deep-learning-based algorithm for human-object interaction detection, which not only uses the locations of humans and objects but also predicts the relationships among them in an image, achieving state-of-the-art performance*1. Because their ability to extract features is limited, conventional methods cannot fully leverage the information in an image to predict interactions between objects. The developed technology overcomes this problem by automatically finding the feature regions that represent interactions among objects. These regions cover not only the target human and object but also related objects, and they can be found even when they are far apart in the image. As a result, a detector built on the developed technology achieves both high speed and high accuracy. This technology is going to be presented at CVPR*2 2021, a top-tier international computer vision conference. We will apply this technology to a broad range of services and help realize a safe and secure society.

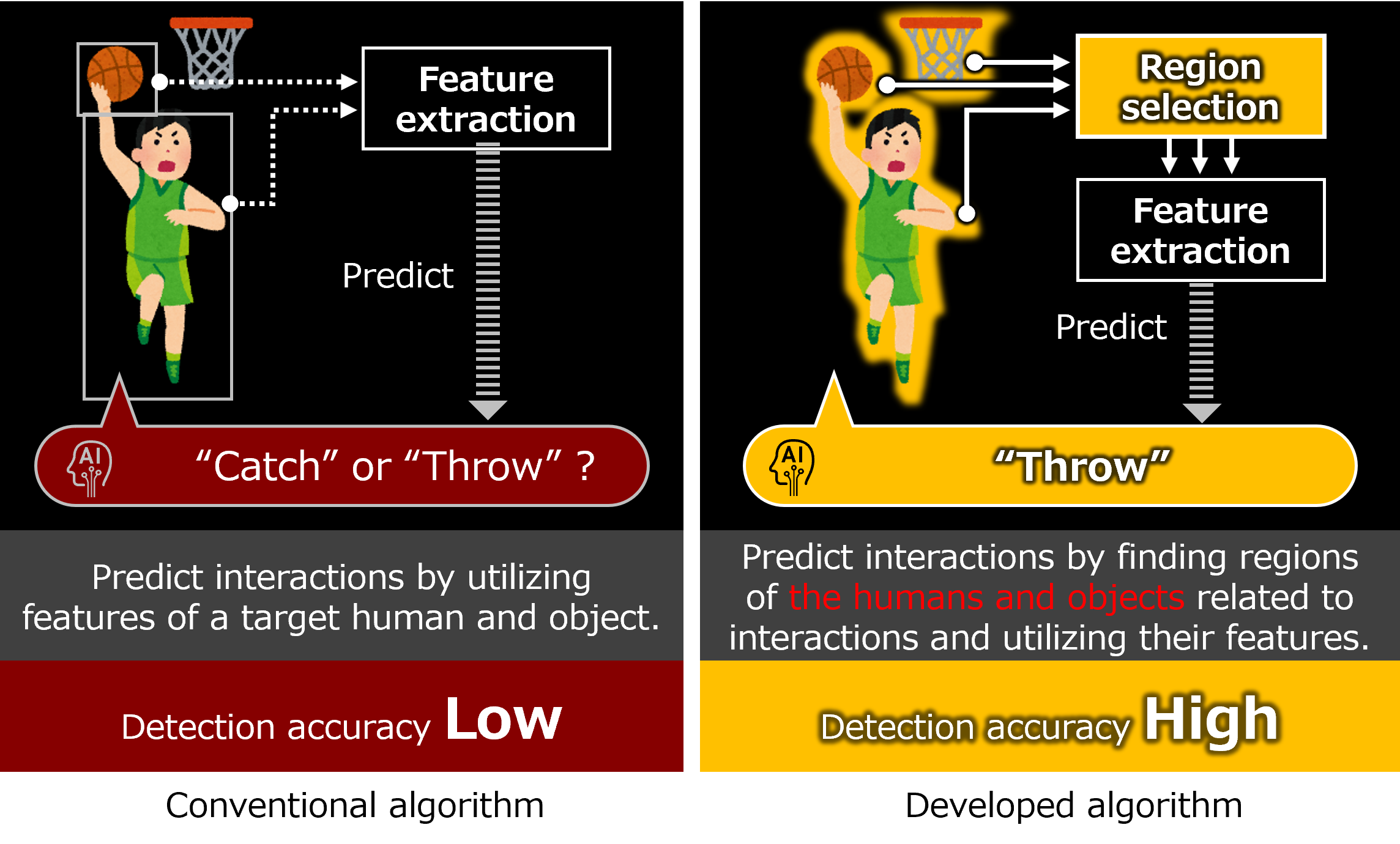

Figure 1 Comparison of the Conventional Method and Proposed Method.

Hitachi developed a deep-learning-based human-object interaction detector. The detector builds on an object detector that identifies what the objects in an image are and where they are, based on the relationships among them. Relationship features scattered across the whole image can be selectively aggregated and used to predict interactions.
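The selective aggregation described above can be pictured as attention-style weighting over image regions. The article gives no implementation details, so the following is only a minimal sketch that assumes scaled dot-product attention. The function names, the region labels, and all feature values are hypothetical and chosen purely for illustration. It is not Hitachi's detector.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend(query, region_features):
    """Aggregate region features, weighting each by its similarity to the query.

    Returns (aggregated_feature, attention_weights).
    """
    dim = len(query)
    # Scaled dot product between the query and every region feature.
    scores = [
        sum(q * f for q, f in zip(query, feat)) / math.sqrt(dim)
        for feat in region_features
    ]
    weights = softmax(scores)
    # Weighted sum of the region features, one dimension at a time.
    aggregated = [
        sum(w * feat[i] for w, feat in zip(weights, region_features))
        for i in range(dim)
    ]
    return aggregated, weights


# Hypothetical 2-D features for four image regions (illustrative values only).
regions = {
    "human": [1.0, 0.2],
    "ball": [0.9, 0.1],
    "basketball_goal": [0.8, 0.3],  # far from the human, but relevant
    "background": [-1.0, 0.0],
}
query = [4.0, 0.0]  # hypothetical query standing in for one human-object pair

aggregated, weights = attend(query, list(regions.values()))
attention_map = dict(zip(regions, weights))  # region name -> attention weight
```

In this toy setup the distant basketball goal receives far more weight than the background, because the weights depend on feature similarity rather than on spatial distance. That is the intuition behind aggregating features image-wide.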

By drawing attention maps, which indicate the regions the detector looks at when predicting interactions, we confirmed that the developed detector can find the feature regions of objects related to an interaction even when those objects are neither the target human nor the target object and are located far away. We also compared the developed method with state-of-the-art human-object interaction detection methods and confirmed that it outperformed them.

A part of this technology will be presented at CVPR 2021, held from June 19 to June 25, 2021.

The submitted paper, ‘QPIC: Query-Based Pairwise Human-Object Interaction Detection with Image-Wide Contextual Information’, is published on arXiv.

For human-object interaction detection, it is crucial to retrieve the information needed to predict interactions from the large amount of information in an image. Based on its experience in developing an AI-based high-speed image retrieval algorithm, Hitachi hypothesized that an object detector that uses the relationships among objects in an image to recognize what and where they are would be effective for human-object interaction detection. We therefore built a deep-learning-based human-object interaction detector on such an object detector, so that it can use the features of non-target but related objects and of distantly located objects. Figure 1 above shows an advantage of the developed technology: our detector can extract features of the basketball goal in addition to those of the target human and object, which increases detection accuracy.

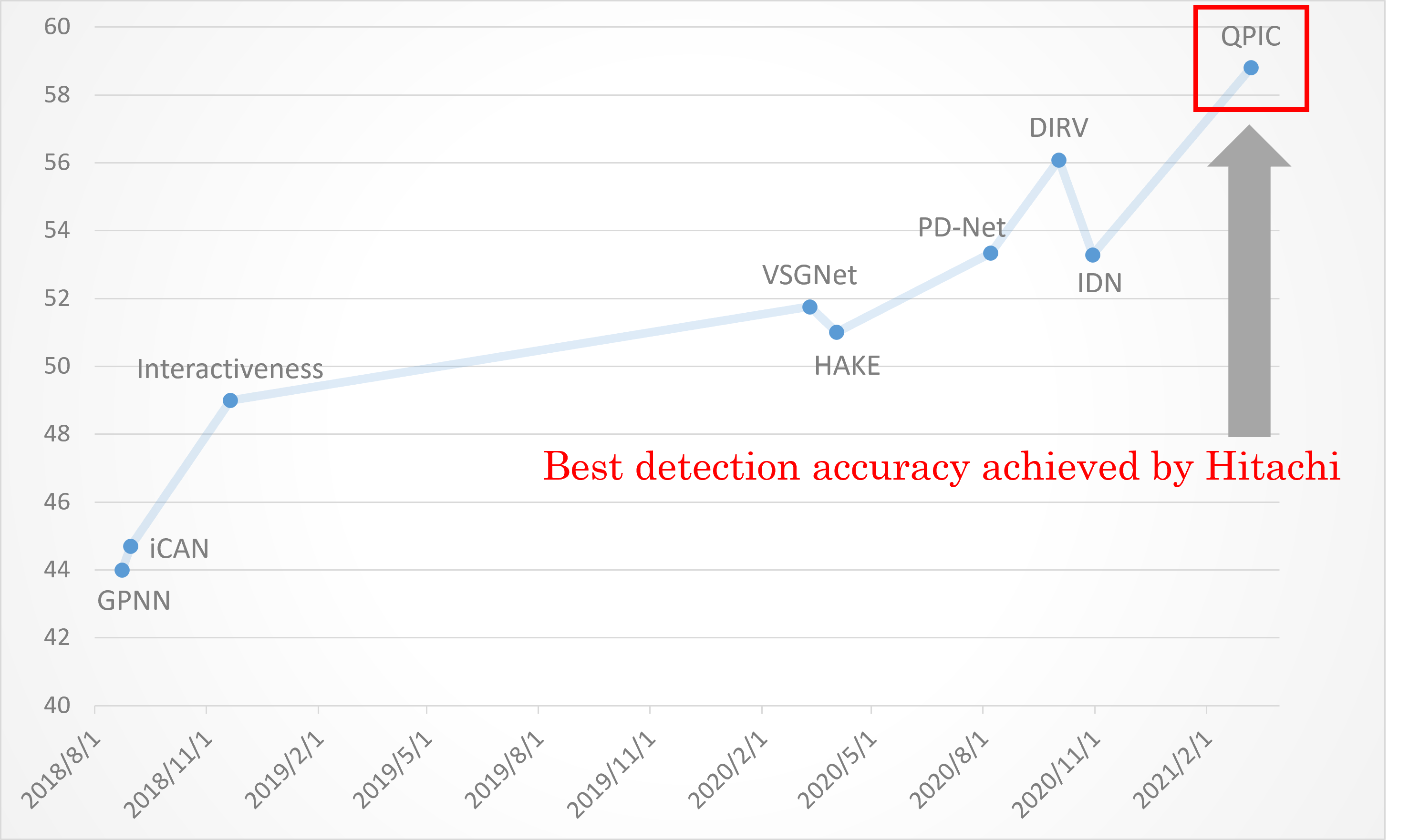

By using this technology, our detector achieved a detection accuracy of 29.9% on HICO-DET, one of the popular benchmark datasets, a 20% improvement over the previous state-of-the-art performance. In addition, as shown in Figure 2, our detector achieved the best performance worldwide, with a detection accuracy of 58.8%, on another well-known benchmark dataset, V-COCO (as of April 7, 2021).
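The article does not say whether the 20% figure is a relative gain or a gain in percentage points, and it does not report the earlier score. As a rough sanity check, the sketch below assumes the relative reading and derives the baseline that reading would imply. The helper names are hypothetical, and the derived baseline is an inference from the two numbers above, not a reported result.

```python
def relative_improvement(new, old):
    """Relative gain of `new` over `old`, e.g. 0.20 for a 20% improvement."""
    return (new - old) / old


def implied_baseline(new, relative_gain):
    """Baseline score that would yield `new` after a given relative gain."""
    return new / (1.0 + relative_gain)


hico_det_accuracy = 29.9  # detection accuracy (%) stated in the article
stated_gain = 0.20        # "20% improvement", ASSUMED here to be relative

# Previous score implied by the relative reading (derived, not reported).
baseline = implied_baseline(hico_det_accuracy, stated_gain)

# Under the percentage-point reading, the previous score would be 20 points lower.
baseline_if_points = hico_det_accuracy - 20.0
```

Under the relative reading the implied earlier accuracy is roughly 24.9%. Under the percentage-point reading it would be about 9.9%.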

Figure 2 Performance Improvement in Detection Accuracy on One of the Well-Known Benchmark Datasets, V-COCO*1

(Prepared by Hitachi Using Information From Papers With Code)

The developed image recognition technology is expected to be applied to a broad range of services such as surveillance, marketing, and sports spectating.

Hitachi is promoting research and development for social innovation while complying with the principles of AI ethics. This technology is developed in accordance with said principles.

For more information, use the enquiry form below to contact the Research & Development Group, Hitachi, Ltd. Please make sure to include the title of the article.